Find out who created the first computer and how this device, so important to humanity’s technological progress, came to be.

Who Created the First Computer: A Comprehensive Journey Through History

The origins of computing trace back to the ingenious concepts of visionaries like Charles Babbage and Dr. John Vincent Atanasoff.

While Babbage’s mechanical difference and analytical engines laid the conceptual groundwork for computational devices, it was Atanasoff and his collaborator Clifford Berry who built what is widely regarded as the first electronic digital computer, the Atanasoff-Berry Computer (ABC), completed in 1942.

The Crucial Role of World War II

The tumultuous era of World War II acted as a crucible for technological advancement, propelling the development of computing machines like Colossus and the ENIAC. Colossus was used for code-breaking, while the ENIAC, completed in 1945, was designed for artillery calculations. By 1951, the UNIVAC emerged as the first commercial computer produced in the United States, with its first unit delivered to the U.S. Census Bureau, marking a significant milestone in the evolution of computing technology.

Pioneering the Era of Personal Computers

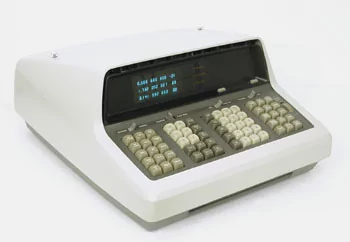

The evolution of computing continued with the advent of personal computers, foreshadowed by precursors like Hewlett-Packard’s HP 9100A programmable scientific calculator and carried forward by the groundbreaking Apple series. IBM’s iconic 5150 personal computer, introduced in 1981, made computing far more accessible and became a ubiquitous presence in businesses worldwide.

Defining the Concept of Computing

Before delving into the history of who created the first computer, it’s crucial to establish a clear understanding of what constitutes a computer. While the meaning of the term “computer” has evolved over time, it fundamentally refers to a device capable of processing and manipulating data to perform various tasks.

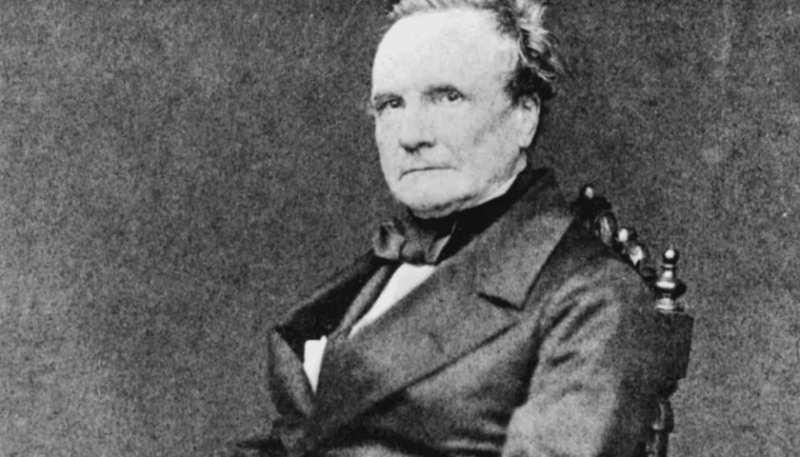

Unraveling the Legacy of Charles Babbage

The contributions of Charles Babbage stand as a cornerstone in the history of computing. Often hailed as the father of computing, Babbage transformed the concept of mechanical computation. His analytical engine, conceived in the 1830s, laid the conceptual framework for modern computers with a processing unit, which Babbage called the “mill”, and a memory, which he called the “store”.

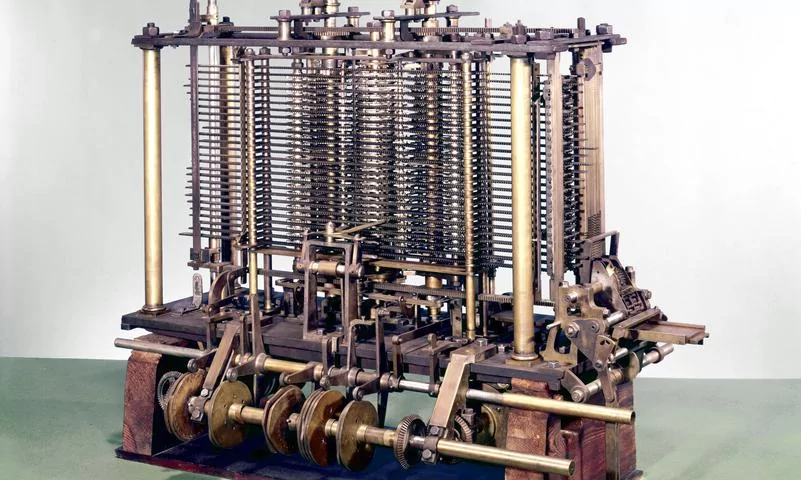

Babbage’s Difference Engine: A Paradigm Shift in Computation

Babbage’s journey towards computational innovation began with his design for the difference engine, a mechanical device intended to tabulate polynomial functions automatically using the method of finite differences. Inspired by the Industrial Revolution’s ethos of efficiency and automation, Babbage sought to mechanize the laborious and error-prone process of producing mathematical tables by hand.

The Analytical Engine: Birth of the First Computer

However, Babbage’s ambitions went beyond the difference engine, leading him to conceive an even grander vision: the analytical engine. This design is often regarded as the first for a general-purpose computer, with a central processing unit and memory akin to modern computing architectures. Although funding setbacks with the British government kept the machine from being built in Babbage’s lifetime, the analytical engine laid the groundwork for subsequent advancements in computing technology.

Ada Lovelace: A Trailblazer in Computer Programming

The story of who created the first computer is incomplete without acknowledging the pivotal contributions of Augusta Ada Byron, commonly known as Ada Lovelace. As a visionary mathematician and collaborator of Charles Babbage, Lovelace played a crucial role in elucidating the potential of the analytical engine. Her insights into algorithmic computation have led many to describe her as the world’s first computer programmer, cementing her legacy as a pioneering figure in computing history.

Dr. John Vincent Atanasoff: A Pioneer in Electronic Computing

While Babbage’s contributions shaped the theoretical landscape of computing, it was Dr. John Vincent Atanasoff who pioneered electronic computing. Seeking a faster way to carry out demanding mathematical work, Atanasoff set out to build an electronic device for solving systems of linear equations. The result, built with Clifford Berry, was the Atanasoff-Berry Computer (ABC), a groundbreaking achievement that laid the foundation for modern electronic computing.

Military Applications and Technological Advancements

The exigencies of World War II propelled the rapid development of computing technology, leading to the creation of specialized machines like Colossus and the ENIAC. Colossus supported cryptographic code-breaking, while the ENIAC, completed in 1945, was built for artillery calculations. After the war, machines like the EDSAC and Whirlwind I underscored the versatility of electronic computing, paving the way for multifunctional computing systems.

Commercialization of Computing: UNIVAC and Beyond

The commercialization of computing technology ushered in a new era of accessibility and innovation. The UNIVAC, the first commercial computer produced in the United States, symbolized a shift in computing accessibility, offering unprecedented computational power to governmental and commercial entities alike. Subsequent advancements like the IBM 305 RAMAC further propelled the evolution of computing by introducing the hard disk drive, which gave programs random access to stored data.

The Advent of Personal Computing: Hewlett-Packard, Apple, and IBM

The period from the late 1960s to the early 1980s witnessed a proliferation of personal computing initiatives from companies like Hewlett-Packard, Apple, and IBM. Hewlett-Packard’s HP 9100A scientific calculator and Apple’s pioneering Apple I and II series heralded a new era of computing accessibility, catering to the growing demands of individual users. IBM’s 5150 personal computer, introduced in 1981, further democratized computing, helping transform it from a niche technology into a household staple.

Conclusion: Charting the Course of Technological Evolution

The history of computing is a story of relentless innovation, collaborative ingenuity, and transformative vision. From the mechanical designs of Charles Babbage to the electronic breakthroughs of Dr. John Vincent Atanasoff, each milestone in computing history has left an indelible mark on human progress.

Looking back over this evolution, we are reminded that no single inventor created the computer. The story of computing is not merely a narrative of individual achievement but a testament to what human intelligence can accomplish through collaborative endeavor.